The move toward data analytics in broker supervision might seem, at face value, to promise objective identification of “high risk” financial advisers. However, once the technical jargon is stripped away, algorithms are just opinions embedded in mathematics.

Algorithms are created by humans and can reinforce human prejudices. Researchers have noted that big data used in algorithms can inherit the prejudices of prior decision-makers or reflect the widespread biases that persist in society at large. If humans made discriminatory or even inadvertently discriminatory choices in the materials used to develop the algorithm, then the algorithm may produce discriminatory outcomes.

FINRA’s High-Risk Representative Algorithm

FINRA released a video interview with Mike Rufino, Executive Vice President and Head of FINRA Member Regulation-Sales Practice, about FINRA’s High-Risk Registered Representative Program. Mr. Rufino discussed some of the criteria and the methodology FINRA uses to identify and monitor High Risk Registered Representatives. He listed the following criteria as inputs into the High-Risk Representative algorithm/model:

Model Criteria

- Association with Firms, especially highly disciplined Firms

- Length of time a Rep has been at a ‘problem’ Firm

- How many problem Firms a Rep has been associated with (see WSJ story on Rep “cockroaching”)

- Licensing exams: test failures and expired licenses

- Investor Harm Disclosures

- Age of Disclosure

- Number of Disclosures

- Types of Disclosures: arbitrations, customer complaints, U4 and U5 filings

- Prior FINRA exam/review of the Rep

Mr. Rufino stated that FINRA uses a three-pronged approach to identify High Risk Brokers. The first prong is a predictive model that applies the weighted criteria above to produce a “numeric score for all our brokers,” which is then subject to a qualitative review by staff. Second, Mr. Rufino said that FINRA looks at the “sheer number of disclosures that an individual may have” to determine whether there is a pattern or issue FINRA should be concerned with. The final prong is to identify individuals who are working in the industry while a bar/ban appeal is pending.
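To make the first prong concrete, here is a minimal sketch of what a weighted-criteria score could look like. It is not FINRA’s model: the feature names, the weights, and the review threshold below are all hypothetical, since FINRA has not published its inputs in full or how they are weighted. The point is only that someone has to choose the weights, and that choice is an opinion.

```python
from typing import Dict, List, Mapping

# HYPOTHETICAL weights, invented for illustration only.
# FINRA has not disclosed its actual inputs or weights.
ILLUSTRATIVE_WEIGHTS: Dict[str, float] = {
    "problem_firm_count": 2.0,      # number of 'problem' firms on the rep's record
    "years_at_problem_firms": 0.5,  # time spent at those firms
    "exam_failures": 1.0,           # licensing exam failures
    "disclosure_count": 1.5,        # total disclosures
    "u5_terminations": 2.5,         # firm-initiated terminations
}


def risk_score(rep: Mapping[str, float],
               weights: Mapping[str, float] = ILLUSTRATIVE_WEIGHTS) -> float:
    """Weighted sum of a rep's features; missing features count as zero."""
    return sum(weight * rep.get(name, 0.0) for name, weight in weights.items())


def flag_for_review(reps: Mapping[str, Mapping[str, float]],
                    threshold: float) -> List[str]:
    """Return the ids of reps whose score meets or exceeds the threshold."""
    return [rep_id for rep_id, features in reps.items()
            if risk_score(features) >= threshold]
```

Under these made-up weights, two reps with identical customer-facing records receive different scores if only one of them has a firm-initiated U5 termination. Any bias in who gets terminated flows directly into the score.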

How Bias is Embedded in Algorithms

A 2016 White House report titled Big Data: A Report on Algorithmic Systems, Opportunity, and Civil Rights identified two ways discriminatory effects can be introduced into algorithms: 1) the data used as inputs to an algorithm; and 2) the inner workings of the algorithm itself.

While FINRA has provided some bullet-points as to the inputs into the High-Risk model, the full algorithm is unknown. The public does not know all of the inputs, and we don’t know how the inputs are weighted. Nor can we reverse engineer the model since FINRA declined to make public the results of the algorithm.

Biased Inputs?

The WH Big Data study reported that flawed “data sets that lack information or disproportionately represent certain populations” can result in skewed algorithms which effectively encode discrimination.

The memorably named study When Harry Fired Sally: The Double Standard in Punishing Misconduct found that financial services firms “are more likely to blame women for problems, since a disproportionate share of misconduct complaints is initiated by the firm, instead of customers or regulators.”1 The over-representation of women in firm-initiated misconduct complaints presumably affects the numeric score from FINRA’s predictive model, which relies on Investor Harm Disclosures, and could also affect the ‘second prong,’ where FINRA looks at the number of disclosures.

The researchers also found that women at financial services firms are 20% more likely to be fired by their firm after a customer complaint than their male peers. FINRA’s High-Risk Representative algorithm also appears to incorporate gender-biased U5 termination filings which could lead to the adverse and inaccurate consequence of an over-representation of women being classified as “High-Risk” by the model. It is important to note that the Harry Fired Sally study found that female advisers engage in less costly misconduct and have a lower propensity towards repeat offenses.

If a female financial adviser is able to find new employment in the industry after a termination (women are 30% less likely to find new jobs than male advisers), the employer will more often be a “riskier” firm. The Market for Financial Adviser Misconduct found that firms that hire more advisers with misconduct records are also less likely to fire advisers for new misconduct.2 Given that women are fired at a higher rate than their male peers, it follows that women will disproportionately end up at riskier firms after a termination, and once again be over-represented in FINRA’s model due to the inclusion of an input described as “association with highly disciplined firms.”

Biased Outputs? Opaque and Uncontestable Models

Since FINRA’s High-Risk Representative algorithm appears to rely on gender-biased data inputs including disparate terminations and disproportionate misconduct complaints, it is possible that FINRA’s model is perpetuating harmful discrimination against women. The lack of transparency into FINRA’s algorithm should give one pause since the algorithm’s outputs are not testable. Without testable outputs we have no way of knowing if biased inputs are resulting in biased outputs, such as over-representation of women on the “High-Risk” broker list.
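If the outputs were available, a basic test for over-representation would be simple to run. The sketch below assumes a hypothetical list of (group, flagged) records; no such data has been released by FINRA, so the function names and the record format are invented for illustration. It compares the share of each group that is flagged as “High-Risk.”

```python
from collections import Counter
from typing import Dict, Iterable, Tuple


def flag_rates(records: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Share of each group flagged, given (group, was_flagged) pairs."""
    totals: Counter = Counter()
    flagged: Counter = Counter()
    for group, was_flagged in records:
        totals[group] += 1
        if was_flagged:
            flagged[group] += 1
    return {group: flagged[group] / totals[group] for group in totals}


def rate_ratio(rates: Dict[str, float], group: str, reference: str) -> float:
    """How many times more often `group` is flagged than `reference`."""
    return rates[group] / rates[reference]


# SYNTHETIC records for illustration only; this is not FINRA data.
synthetic = ([("women", True)] * 4 + [("women", False)] * 4
             + [("men", True)] * 2 + [("men", False)] * 6)
rates = flag_rates(synthetic)
ratio = rate_ratio(rates, "women", "men")
```

A ratio well above 1 would not prove discrimination on its own, but it would show where to look. Without the outputs, even this first step is not possible.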

As Cathy O’Neil wrote in Weapons of Math Destruction, models are opaque, unregulated and uncontestable, even when they are wrong.

___________________________________________________________________________________________

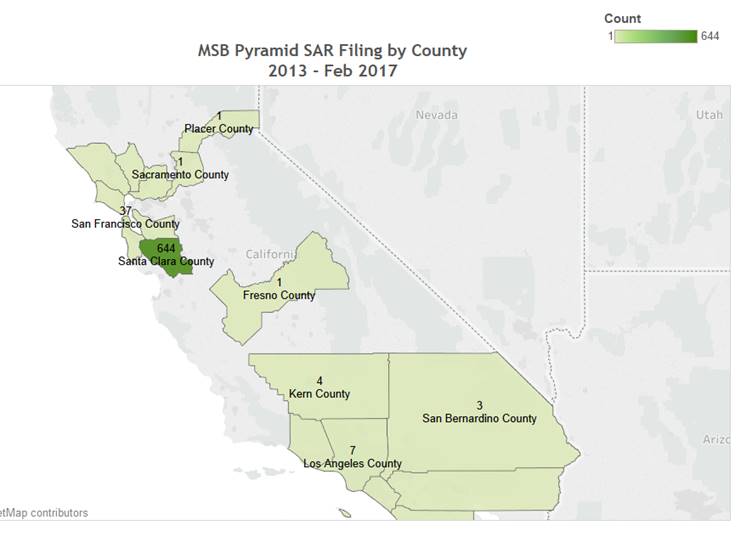

See my article on Uncovering Bias in AML Algorithms to learn more about the potential for bias in regulatory algorithms.

See my article on the Links between FINRA’s High Risk Rep Program & Expungement.

1 “When Harry Fired Sally: The Double Standard in Punishing Misconduct,” Mark L. Egan, Gregor Matvos & Amit Seru.

2 “The Market for Financial Adviser Misconduct,” Mark L. Egan, Gregor Matvos & Amit Seru.