In my upcoming article, “Unmasking Bias in AML Algorithms,” in the September/October edition of ACAMS Today, I explore the potential for bias (racial, ethnic, religious, gender, etc.) in the anti-money laundering (AML) algorithms that financial institutions use to detect potentially suspicious activity.

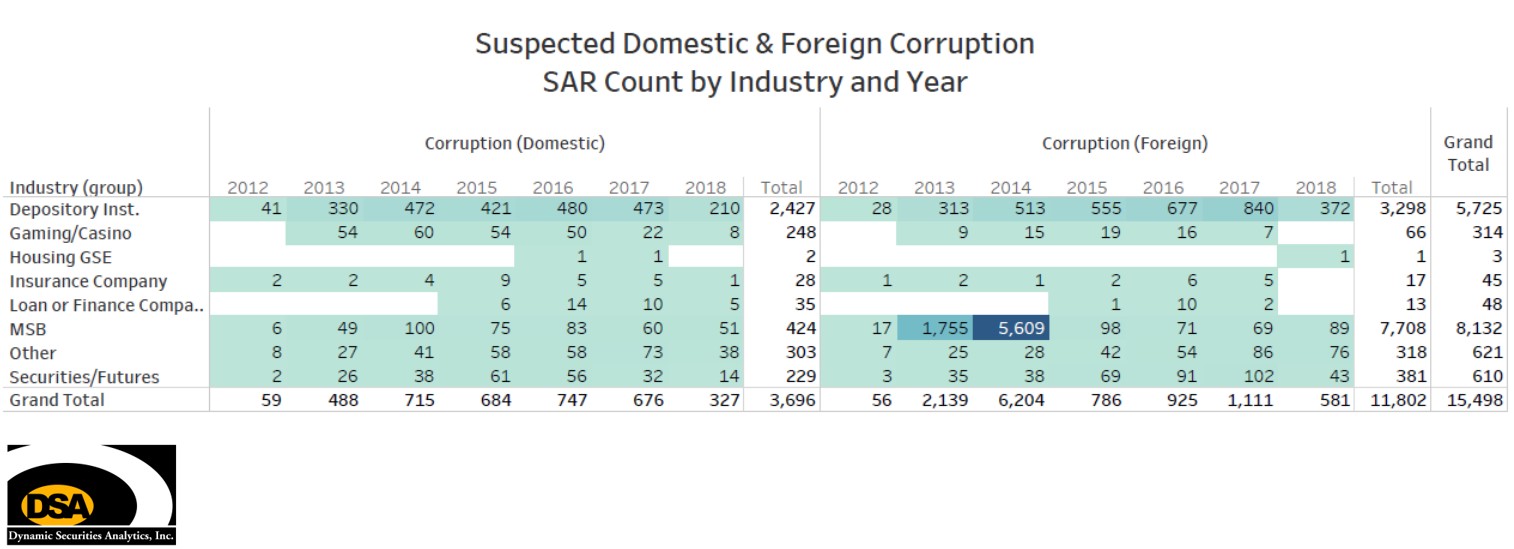

The most serious outcome of an AML algorithm flagging activity is that the financial institution files a suspicious activity report (SAR) with FinCEN. In 2015, FinCEN received over 1.8 million SARs naming customers and other individuals. FinCEN’s SAR database is available to more than 9,000 law enforcement agencies and regulators, who query the system more than 30,000 times each day.¹ Although SARs are a leading source of investigative leads, FinCEN has never conducted a study of whether bias occurs in SAR filings.²

In response to a Freedom of Information Act request filed by Dynamic Securities Analytics (DSA), a FinCEN Disclosure Officer reported:

> FinCEN does not collect racial, religious, or ethnic demographics on SARs.

FinCEN and financial institutions should read the Bloomberg article “Amazon Doesn’t Consider the Race of Its Customers. Should It?” (the inspiration for this post’s title) to better understand how algorithms can produce a disparate impact.

__________________________

1 Remarks by Jennifer Shasky Calvery, FinCEN Director, at Predictive Analytics World for Government, October 13, 2015.

2 2016-482 Jimenez FOIA Response Letter, issued in response to a Freedom of Information Act request filed by Dynamic Securities Analytics, Inc.